The home for great culture

Publish on Substack and own your work

Russell Clark shares analysis and knowledge from 20 years working in the markets, specializing in hedge funds, macro and short selling.

Hundreds of paid subscribers · £35/month

Bad Brain from writer and widow Ashley Reese shares compulsive notes on life, death, pop culture, and the internet.

Hundreds of paid subscribers · $7/month

Receive bestselling author and podcast host Jonathan Fields’s Wake-up Call in your inbox every Sunday, and be revived by a new topic and prompt, so you don’t fall asleep at the wheel of your own life.

Launched 10 days ago · $5.99/month

U.K.-based charity Royal Literary Fund helps writers in financial need through grants, education, and outreach programmes. On Substack they share features about writing, writers, and their craft—from their fellows and staff.

Launched 6 months ago

Salty Popcorn delivers a dose of movie headlines, trailers and feature reviews (without spoilers) direct to your inbox every other Thursday—just in time for movie night.

Launched 3 years ago

Creating a new media ecosystem

You made it, you own it.

You always own your intellectual property, mailing list, and subscriber payments. With full editorial control and no gatekeepers, you can do the work you most believe in.

Create your Substack

Grow your audience.

Marketing isn’t all on your shoulders. More than 50% of all new free subscriptions and 25% of paid subscriptions to Substacks come from within our network.

Let us handle everything else.

A Substack combines a website, blog, podcast, video tools, payment system, and customer support team — all integrated seamlessly in a simple interface. We handle the admin, billing, and tech so you can focus on making your best work.

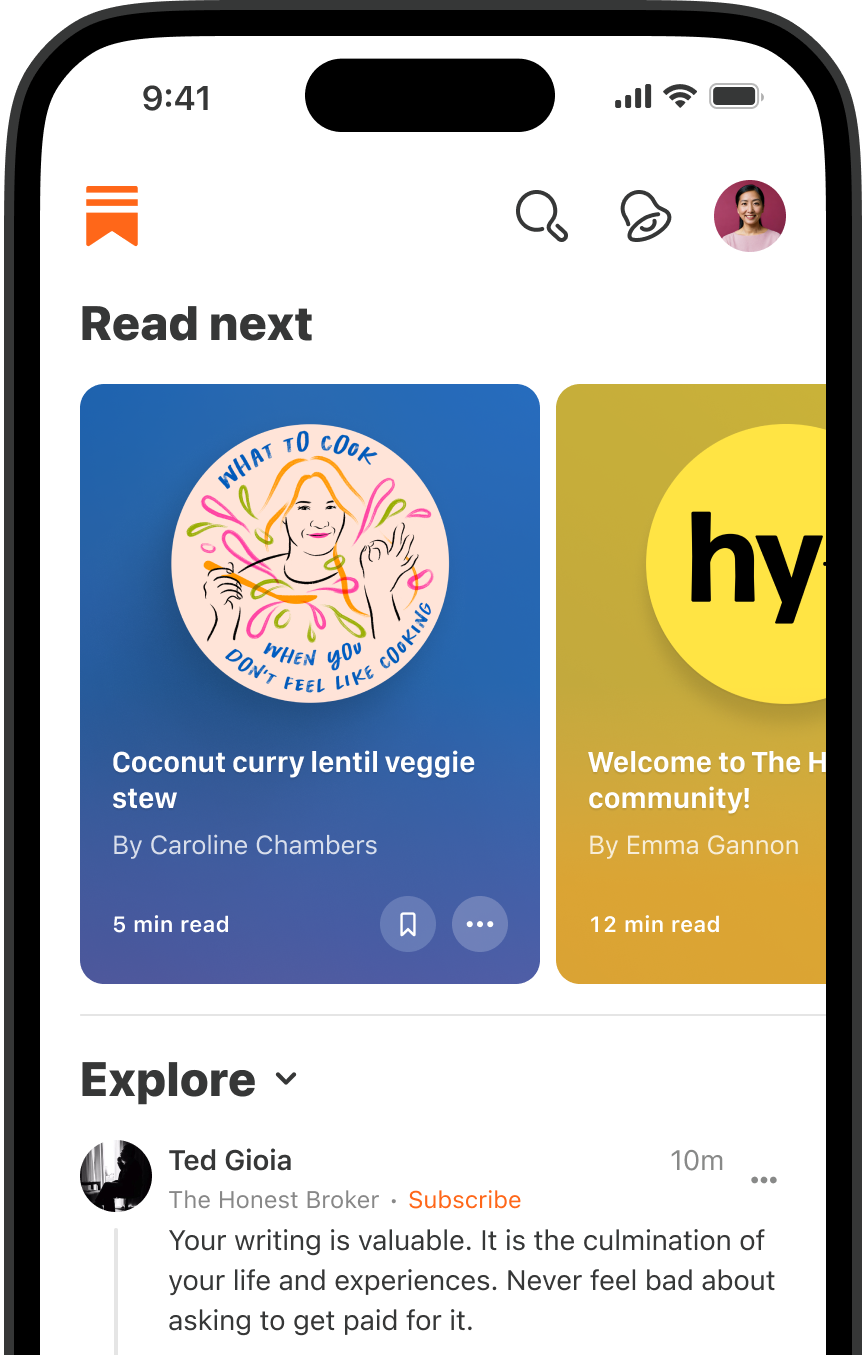

A world-class reading, watching, and listening experience

We make it easy to start a podcast (including video podcasts) and to produce voiceovers and narrations for your text posts. Share episodes with Substack subscribers and all the major podcast players in one click. You can make your show free to everyone, or you can paywall the whole podcast, select episodes, or everything after a chosen point in a particular episode.

Create a video post, or upload or record videos directly into a post. You can also turn your videos into a podcast, make videos available to everyone, or paywall all or part of a video.

On Substack, you’re not publishing into a void. Comments, Chat, Notes, direct messaging, and community threads connect you and your subscribers directly.

“Starting a Substack was the best decision of my life.”

– Edwin Dorsey, The Bear Cave

Substack basics

What is a Substack?

Substack is much more than a newsletter platform. A Substack is an all-encompassing publication that accommodates text, audio, and video. No tech knowledge is required. Anyone can start a Substack and publish posts directly to subscribers’ inboxes, by email and in the Substack app. Without ads or gatekeepers in the way, you can sustain a direct relationship with your audience and retain full control over your creative work.

Do I need to pay for Substack?

It’s free to get started on Substack. If you turn on paid subscriptions, Substack keeps a 10% cut of revenue to cover operating costs such as building growth tools for publishers, developing new features, and providing world-class customer support. There are no hidden fees, and we only make money when publishers do.

Do I own what I publish on Substack?

You will always own your work, everything you publish, and your relationships with your subscribers. We make it easy to import and export your archive, subscriber list, and payments information to and from other platforms.

Will Substack help me grow my audience?

Yes. More than 50% of all new free subscriptions and 25% of paid subscriptions to Substacks come from within our network.

How do I move my past work to Substack?

If you already have an audience on WordPress, Mailchimp, Beehiiv, Ghost, Medium, Tumblr, or another platform, you can easily import your posts and your email list during the Substack setup process.